Google has expanded its “Live Search” feature to more than 200 countries and regions, after launching it to users of its app in the United States last September.

The feature lets users point their phone’s camera at any item or scene, then ask direct questions about what appears in front of them and get immediate answers based on real-time visual analysis. The company first unveiled the feature at the Google I/O 2025 conference before beginning a gradual rollout.

The feature now relies on the Gemini 3.1 Flash model, which Google says delivers a faster, smoother experience and more natural conversations. The model also supports multiple languages natively, which makes the feature usable globally without language barriers.

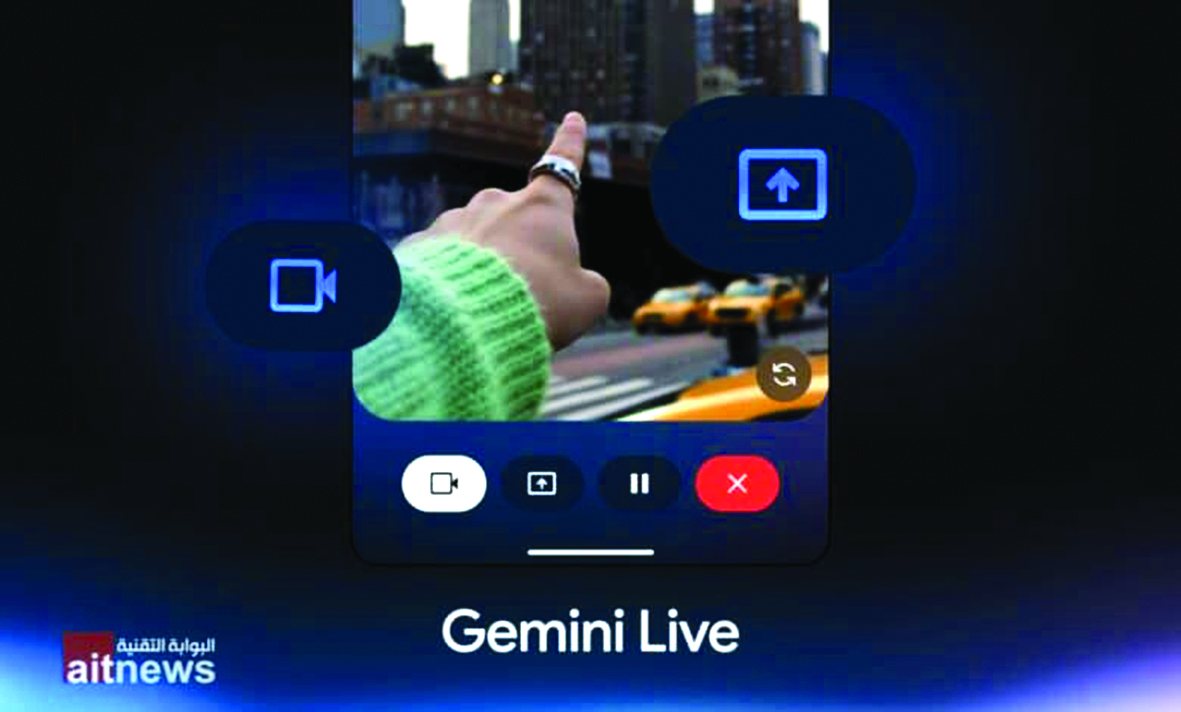

The feature can be accessed in the Google app on Android and iOS through the “Live” button below the search bar. It is also available within “Google Lens,” where a “Live” option appears that lets users start interacting directly with the video content.

The “Live Search” feature is part of a broader push at Google to turn search into an interactive multimedia experience. It is not limited to analyzing images; it relies on models capable of understanding text, audio, and video together in real time.

This integration enables practical use cases, such as suggesting recipes based on the ingredients in view or providing instant guidance on items in the user’s surroundings.